Month: May 2011

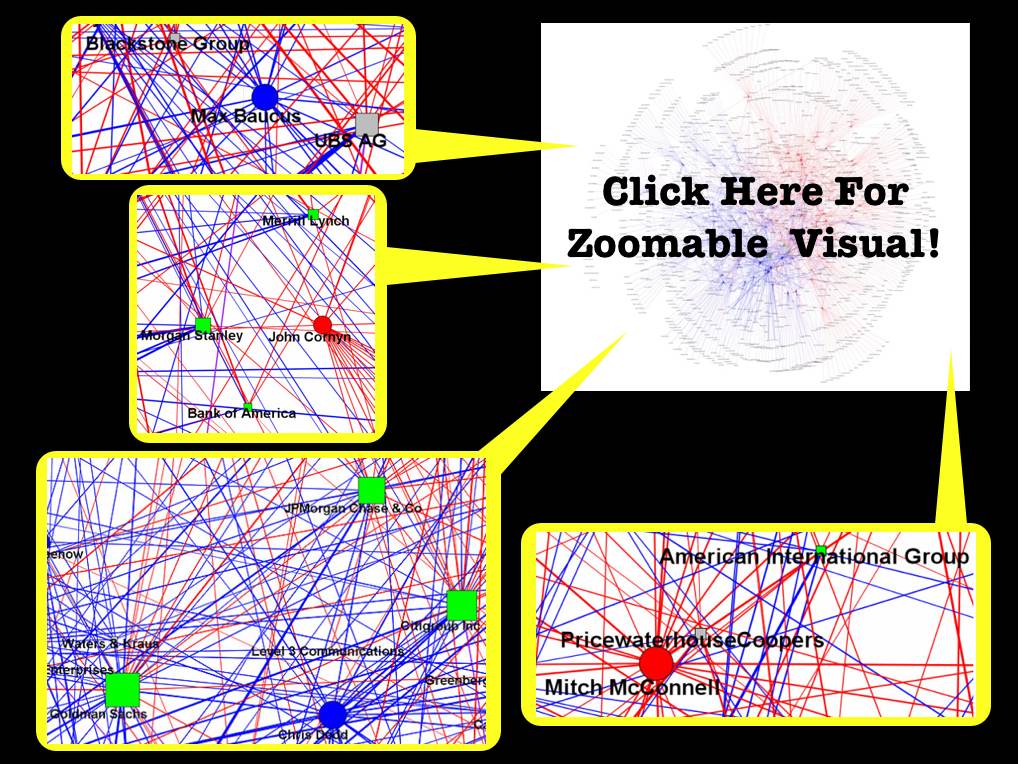

Senators of 110th Congress – A Perspective on the Campaign Finance Ecosystem [Repost]

Due to some behind-the-scenes technical difficulties, our series of posts on the campaign finance ecosystem of the 110th Congress has been unavailable. I am happy to report that we now have everything restored. We thought it would be nice to repost the visual above in light of recent decisions such as Citizens United v. Federal Election Commission. The Citizens United case has justifiably generated a significant amount of media and blogosphere coverage. For those not familiar with the Court’s decision, a full roundup of analysis is available at SCOTUS Blog and Election Law Blog. For those interested, our original post is offered here and the documentation for the network creation and data collection is here. A variety of other posts related to the 110th Congress are also available under this tag.

Big Data: The Next Frontier for Innovation, Competition and Productivity [Via McKinsey Global Institute]

There is growing interest in “Big Data” – both within the academy and within the private sector. For example, consider several major review articles on the topic including “Big Data” from Nature, “The Data Deluge” from The Economist and “Dealing with Data” from Science.

Indeed, those interested should consult the proceedings/video from recent conferences such as Princeton CITP Big Data 2010 (where I presented on the Big Data and Law panel), GigaOM 2011 NYC, and the O’Reilly Strata 2011 Making Data Work Conference. A new report from the McKinsey Global Institute, entitled Big Data: The Next Frontier for Innovation, Competition and Productivity, summarizes some of these insights and offers new ones. This report was the subject of a recent NY Times article, New Ways to Exploit Raw Data May Bring Surge of Innovation, a Study Says. Here is one highlight from the article: “McKinsey says the nation will also need 1.5 million more data-literate managers, whether retrained or hired. The report points to the need for a sweeping change in business to adapt a new way of managing and making decisions that relies more on data analysis. Managers, according to the McKinsey researchers, must grasp the principles of data analytics and be able to ask the right questions.”

Of course, here at Computational Legal Studies, we are interested in the potential of a Big Data revolution in both legal practice and in the scientific study of law and legal institutions. Several recent articles on the subject argue that a major reordering is — well — already underway. For example, Law’s Information Revolution (By Bruce H. Kobayashi & Larry Ribstein), The Practice of Law in the Era of ‘Big Data’ (By Nolan M. Goldberg and Micah W. Miller) and Computer Programming and the Law: A New Research Agenda (By Paul Ohm) highlight different elements of the broader question.

We hope to share additional thoughts on this topic in the months to come. In the meantime, I would highlight the slides from my recent presentation at the NELIC Conference at Berkeley Law. My brief talk was entitled Quantitative Legal Prediction and it is a preview of some of my thoughts on the changing market for legal services. Please stay tuned.

Computational Social Science Tutorial @ 2011 International Joint Conference on Neural Networks

Those of you interested in developing or strengthening your computational social science skills should consider attending the 2011 International Joint Conference on Neural Networks, where Peter Erdi (Kalamazoo College & Hungarian Academy of Sciences) will be running the very useful workshop displayed above. I have learned a tremendous amount from Peter Erdi and would thus recommend the workshop to you.

The Great Stagnation: Why Hasn’t Recent Technology Created More Jobs? [PBS Newshour]

As part of his continuing coverage of Making Sen$e of financial news, Paul Solman reports on why more good jobs haven’t been created in recent years. Can new technological innovations create widespread job growth as past generations have seen? Tyler Cowen (George Mason / Marginal Revolution) argues “there is an innovation drought, relative to the industrial revolutions of the past and to other countries today.” Erik Brynjolfsson (MIT Sloan) counters “I’m an optimist about technological progress, but I’m not nearly as optimistic about our ability to keep up with it. We have got some real problems. I just want to make it clear that the problem is not stagnation. The problem is more serious in some ways, which is our basic human ability to keep up with technological progress. That problem is going to get worse and worse as technology speeds faster and faster.”

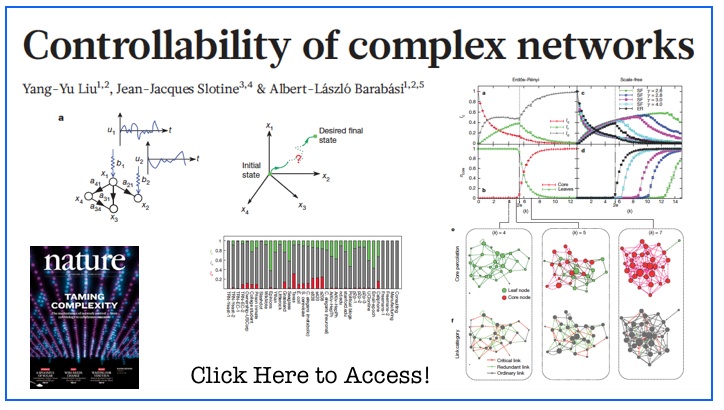

Controllability of Complex Networks [via Nature]

Abstract: “The ultimate proof of our understanding of natural or technological systems is reflected in our ability to control them. Although control theory offers mathematical tools for steering engineered and natural systems towards a desired state, a framework to control complex self-organized systems is lacking. Here we develop analytical tools to study the controllability of an arbitrary complex directed network, identifying the set of driver nodes with time-dependent control that can guide the system’s entire dynamics. We apply these tools to several real networks, finding that the number of driver nodes is determined mainly by the network’s degree distribution. We show that sparse inhomogeneous networks, which emerge in many real complex systems, are the most difficult to control, but that dense and homogeneous networks can be controlled using a few driver nodes. Counterintuitively, we find that in both model and real systems the driver nodes tend to avoid the high-degree nodes.”
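For readers who want a feel for what a "driver node" count looks like in practice, here is a minimal Python sketch. The abstract does not spell out the method; as we understand the paper, the minimum number of driver nodes equals the number of nodes left unmatched by a maximum matching of the directed network, with a floor of one. The function names and the toy graphs are ours and purely illustrative, not the authors' code.

```python
def max_matching_size(n, edges):
    """Size of a maximum matching in a directed graph on nodes 0..n-1.

    Each directed edge u -> v is treated as a bipartite edge between the
    "out" copy of u and the "in" copy of v (simple augmenting-path search).
    """
    adj = [[] for _ in range(n)]
    for u, v in edges:
        adj[u].append(v)
    match_in = [-1] * n  # match_in[v] = u if edge u -> v is in the matching

    def try_augment(u, seen):
        for v in adj[u]:
            if v in seen:
                continue
            seen.add(v)
            if match_in[v] == -1 or try_augment(match_in[v], seen):
                match_in[v] = u
                return True
        return False

    return sum(try_augment(u, set()) for u in range(n))


def min_driver_nodes(n, edges):
    """Unmatched nodes need their own control signal; at least one is needed."""
    return max(n - max_matching_size(n, edges), 1)


# Hypothetical toy networks on four nodes.
chain = [(0, 1), (1, 2), (2, 3)]   # directed path
star = [(0, 1), (0, 2), (0, 3)]    # hub pointing at three leaves
print(min_driver_nodes(4, chain), min_driver_nodes(4, star))
```

Under these assumptions the chain can be steered from a single node, while the star needs three, which is consistent with the abstract's remark that driver nodes tend to avoid the hubs.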

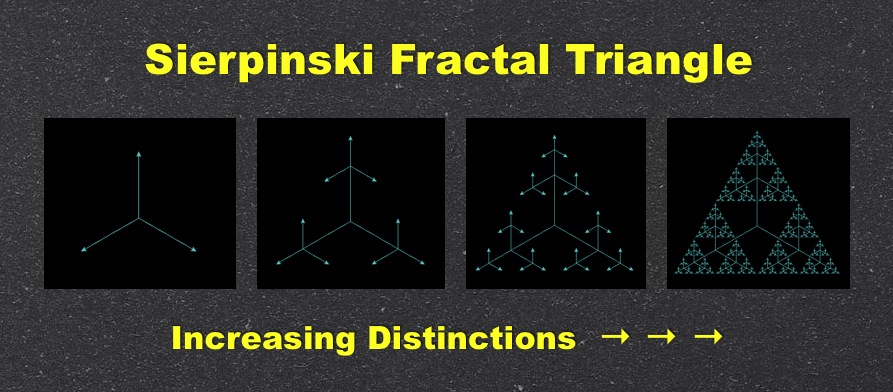

How Long is the Coastline of the Law: Additional Thoughts on the Fractal Nature of Legal Systems [Repost]

Do legal systems have physical properties? Considered in the aggregate, do the distinctions upon distinctions developed by common law judges self-organize in a manner that can be said to have a definable physical property (at least at a broad level of abstraction)? The answer might lie in fractal geometry.

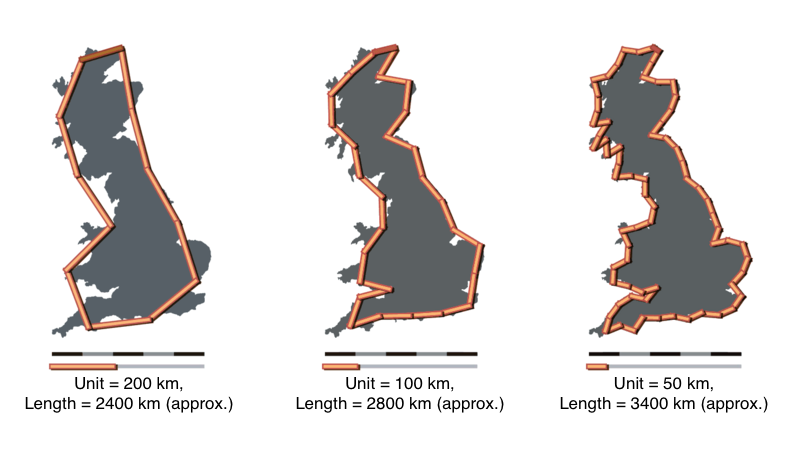

Fractal geometry was developed in a set of classic papers by the late mathematician Benoît Mandelbrot. The original paper in the field, How Long is the Coastline of Britain, describes the coastline measurement problem. In short form, the length of the coastline is a function of the size of the measurement unit one employs. As shown below, as the unit of measurement decreases the length of the coastline increases. The ideas expressed in this and subsequent papers have been applied to a wide class of substantive questions. The application to economic systems has been particularly illuminating. Given recent economic events, we agree with the view of the Everyday Economist that the applied economic theory built upon his work should have earned Mandelbrot a share of the Nobel Prize. [Check out Mandelbrot @ TED 2010]
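To make the coastline measurement problem concrete, here is a small Python sketch. It uses a Koch curve as a stand-in "coastline" (our assumption for illustration, not real coastline data) and measures it with ever smaller rulers. In Mandelbrot's formulation the measured length scales roughly as ruler^(1 - D), so a log-log fit of length against ruler size gives an estimate of the fractal dimension D. The function names are hypothetical.

```python
import math


def koch_curve(level):
    """Vertices of a Koch curve over the unit interval after `level` refinements."""
    pts = [(0.0, 0.0), (1.0, 0.0)]
    cos60, sin60 = 0.5, math.sqrt(3) / 2
    for _ in range(level):
        new = [pts[0]]
        for (x0, y0), (x1, y1) in zip(pts, pts[1:]):
            dx, dy = (x1 - x0) / 3, (y1 - y0) / 3
            a = (x0 + dx, y0 + dy)
            b = (x0 + 2 * dx, y0 + 2 * dy)
            # Peak of the bump: the middle third rotated by 60 degrees about a.
            peak = (a[0] + dx * cos60 - dy * sin60, a[1] + dx * sin60 + dy * cos60)
            new += [a, peak, b, (x1, y1)]
        pts = new
    return pts


def polyline_length(pts):
    return sum(math.dist(p, q) for p, q in zip(pts, pts[1:]))


def fitted_dimension(max_level=6):
    """Least-squares slope of log(length) vs. log(ruler); D = 1 - slope."""
    xs, ys = [], []
    for k in range(1, max_level + 1):
        ruler = 3.0 ** -k  # segment size at refinement level k
        xs.append(math.log(ruler))
        ys.append(math.log(polyline_length(koch_curve(k))))
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum(
        (x - mx) ** 2 for x in xs
    )
    return 1 - slope


for k in range(1, 5):
    print(f"ruler = 3^-{k}: measured length = {polyline_length(koch_curve(k)):.4f}")
print(f"fitted dimension = {fitted_dimension():.4f}")
```

Each time the ruler shrinks by a factor of three the measured length grows by a factor of 4/3, and the fitted dimension comes out close to log 4 / log 3, which is the known value for this curve.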

A more abstract fractal is the simple version of the Sierpinski triangle displayed at the top of this post. Here, there exists self-similarity at all levels. Specifically, at each iteration of the model, the triangles at the tip of each of the lines replicate into self-similar versions of the original triangle. If you click on the visual above, you can run the applet (provided you have Java installed on your computer). {Side note: those of you who are NKS / Wolfram fans will know the Sierpinski triangle can be generated using the cellular automaton Rule 90.}
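For those without the applet handy, the Rule 90 side note can be sketched in a few lines of Python. This is a minimal illustration under our own assumptions (a single live cell at the center, cells beyond the edges treated as dead); the function name and grid size are hypothetical. Under Rule 90 each new cell is the XOR of its left and right neighbors.

```python
def rule90(width=31, steps=16):
    """Rows of a Rule 90 cellular automaton started from one live center cell."""
    row = [0] * width
    row[width // 2] = 1
    rows = [row]
    for _ in range(steps - 1):
        prev = rows[-1]
        # New cell = left neighbor XOR right neighbor; off-grid cells count as 0.
        nxt = [
            (prev[i - 1] if i > 0 else 0) ^ (prev[i + 1] if i < width - 1 else 0)
            for i in range(width)
        ]
        rows.append(nxt)
    return rows


for r in rule90():
    print("".join("#" if cell else " " for cell in r))
```

Printed row by row, the live cells trace out the nested triangles of the Sierpinski pattern.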

For those who are interested in another demonstration consider the Koch Snowflake — a fractal which also offers a view of the relevant properties. The Koch Snowflake is a curve with infinite length (i.e. there is no convergence even though it is located in a bounded region around the original triangle). Click here to view an online demo of the Koch Snowflake.
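The "infinite length in a bounded region" property can be checked with a little arithmetic. In the standard construction each iteration replaces every edge with four edges one third as long, so the perimeter is multiplied by 4/3 each time while the added area shrinks geometrically. A minimal Python sketch (function names are ours) using exact fractions:

```python
from fractions import Fraction


def koch_perimeter(n, side=Fraction(1)):
    """Perimeter after n iterations, starting from a triangle with the given side."""
    return 3 * side * Fraction(4, 3) ** n


def koch_area_ratio(n):
    """Area after n iterations, relative to the area of the original triangle."""
    ratio = Fraction(1)
    for k in range(1, n + 1):
        # Iteration k adds 3 * 4**(k - 1) triangles, each 1 / 9**k of the original.
        ratio += Fraction(3 * 4 ** (k - 1), 9 ** k)
    return ratio


for n in (0, 1, 2, 5, 10, 20):
    print(n, float(koch_perimeter(n)), float(koch_area_ratio(n)))
```

The perimeter column grows without bound, while the area column creeps up toward 8/5 of the original triangle and never exceeds it.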

So, you might be wondering … what is the law analog to fractals? As a first-order description of one important dynamic of the common law, we believe significant progress can be made by considering the conditions under which legal systems behave in a manner similar to fractals. For those interested, a number of important papers have discussed the fractal nature of legal systems. The original idea, developed in the context of legal argumentation, is outlined in two important early papers, The Crystalline Structure of Legal Thought and The Promise of Legal Semiotics, both by Jack Balkin. The empirical case began more than ten years ago in the important paper How Long is the Coastline of the Law? Thoughts on the Fractal Nature of Legal Systems by David G. Post & Michael B. Eisen. It continues in more recent scholarship such as The Web of the Law by Thomas Smith.

In our view, the utility of this research is not to adjudicate the common law to be a fractal. Indeed, there exist mechanisms which likely prevent legal systems from actually behaving as an unbounded fractal. The purpose of the discussion is to determine whether describing law as a fractal is a reasonable first-order description of at least one dynamic within this complex adaptive system. While full adjudication of these questions is still an area of active research, we highlight these ideas for their important potential contribution to positive legal theory.

One thing we want to flag is the important relationship between the power law distributions we discussed in these prior posts (here and here) and the original work of Benoît Mandelbrot. The mapping of the power-law-like properties displayed by the common law and its constitutive institutions is part of the larger empirical case for the fractal nature of legal systems. Building upon the prior work, in two recent papers, which are available on SSRN here and here, we mapped this property of self-organization among two sets of legal elites: judges and law professors.

Dynamic Reconfiguration of Human Brain Networks during Learning [From PNAS]

Abstract: “Human learning is a complex phenomenon requiring flexibility to adapt existing brain function and precision in selecting new neurophysiological activities to drive desired behavior. These two attributes—flexibility and selection—must operate over multiple temporal scales as performance of a skill changes from being slow and challenging to being fast and automatic. Such selective adaptability is naturally provided by modular structure, which plays a critical role in evolution, development, and optimal network function. Using functional connectivity measurements of brain activity acquired from initial training through mastery of a simple motor skill, we investigate the role of modularity in human learning by identifying dynamic changes of modular organization spanning multiple temporal scales. Our results indicate that flexibility, which we measure by the allegiance of nodes to modules, in one experimental session predicts the relative amount of learning in a future session. We also develop a general statistical framework for the identification of modular architectures in evolving systems, which is broadly applicable to disciplines where network adaptability is crucial to the understanding of system performance.”

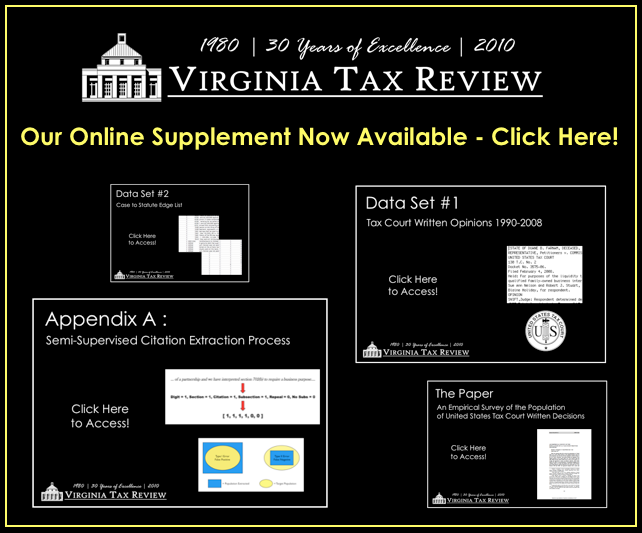

Bommarito, Katz & Isaacs-See –> Virginia Tax Review [ Online Supplement and Datasets ]

Our paper An Empirical Survey of the Population of United States Tax Court Written Decisions was recently published in the Virginia Tax Review. We have just placed supplementary materials online (click here or above to access).

Simply put, our paper is a “dataset paper.” While common in the social and physical sciences, there are far fewer (actually borderline zero) “dataset papers” in legal studies.

In our estimation, the goals of a “dataset paper” are threefold:

- (1) Introduce the data collection process with specific emphasis upon why the collection method was able to identify the targeted population

- (2) Highlight some questions that might be considered using this and other datasets

- (3) Make the data set available to various applied scholars who might like to use it

As subfields such as empirical legal studies mature (and in turn legal studies starts to look more like other scientific disciplines) it would be reasonable to expect to see additional papers of this variety. With the publication of the online supplement, we believe our paper has achieved each of these goals. Whether our efforts prove useful for others — well — only time will tell!