Month: November 2020

A Third Vaccine Success – Oxford University breakthrough on global COVID-19 vaccine

Very promising news — and now some of the key questions for 2021 …

What is the Venn between these candidate vaccines?

Hopefully it is not perfectly overlapping so a patient can take one vaccine if another vaccine proves to be ineffective.

Where is the testing regime to allow folks to explore the efficacy at the personal level?

While it is helpful to offer a characterization of the mean-field performance of a vaccine, we cannot expect folks to ‘get back to normal’ unless they have some personal assurance that the vaccine has actually worked for them.

How long does immunity last ?

This is still unknown. 6 months, 1 year, etc. Also, even if the vaccine ‘fails’ or wanes how much does it reduce the severity of COVID-19?

What about Children?

Trials for Children have yet to begin (or have only recently started). While Children appear to have had less issues with COVID-19 (perhaps because of exposure to other coronaviruses, etc.), there is still the question of how well the vaccine will perform on Children.

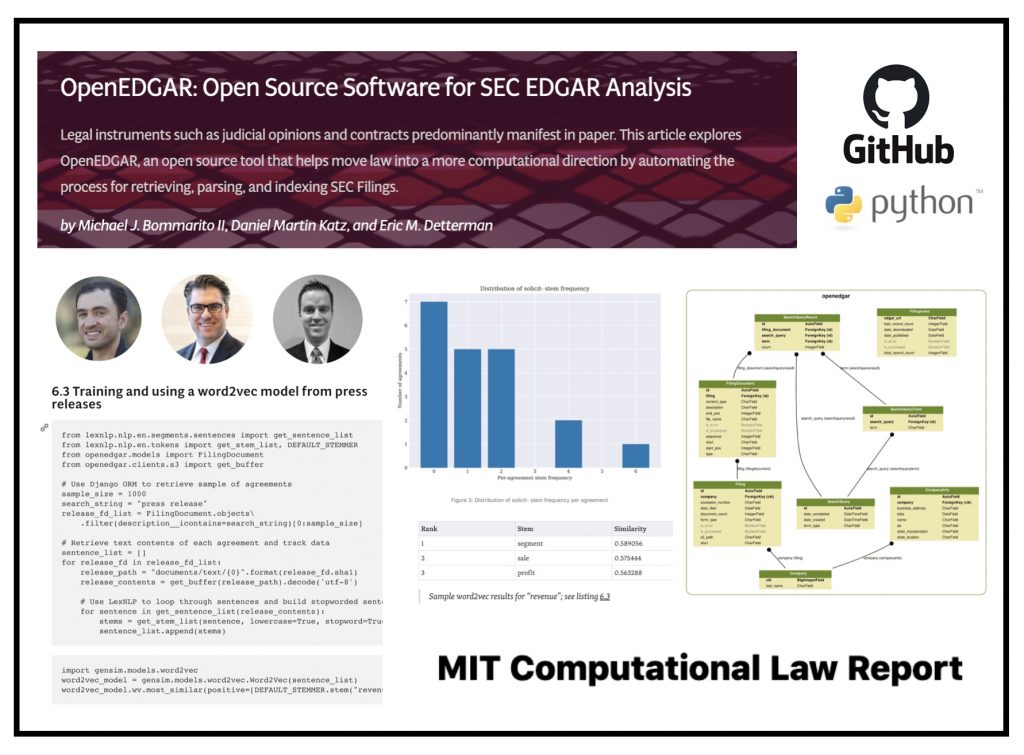

OpenEDGAR: Open Source Software for SEC EDGAR Analysis is published in MIT Computational Law Report

Today our Paper – “OpenEDGAR: Open Source Software for SEC EDGAR Analysis” was published in MIT Computational Law Report.

ABSTRACT: OpenEDGAR is an open source Python framework designed to rapidly construct research databases based on the Electronic Data Gathering, Analysis, and Retrieval (EDGAR) system operated by the US Securities and Exchange Commission (SEC). OpenEDGAR is built on the Django application framework, supports distributed compute across one or more servers, and includes functionality to (i) retrieve and parse index and filing data from EDGAR, (ii) build tables for key metadata like form type and filer, (iii) retrieve, parse, and update CIK to ticker and industry mappings, (iv) extract content and metadata from filing documents, and (v) search filing document contents. OpenEDGAR is designed for use in both academic research and industrial applications, and is distributed under MIT License at https://github.com/LexPredict/openedgar

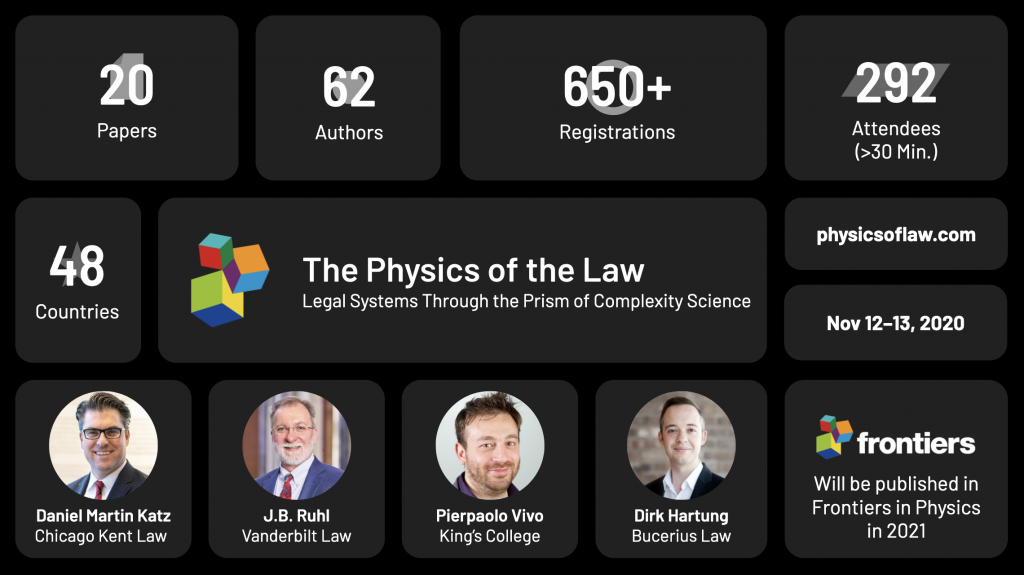

Final Data on the Physics of Law ONLINE Conference !

Thanks to everyone who attended The Physics of Law Virtual Conference earlier this month. Overall, we had 292+ Attendees from 48 Countries watch the presentation of 20 Academic Papers by 62 Authors. We saw a wide range of methods from Physics, Computer Science and Applied Mathematics devoted to the exploration of legal systems and their outputs.

Methodological approaches included Agent Based Modeling, Game Theory and other Formal Modeling, Dynamics of Acyclic Digraphs, Knowledge Graphs, Entropy of Legal Systems, Temporal Modeling of MultiGraphs, Information Diffusion, etc.

NLP Methods on display included traditional approaches such as TF-IDF, n-grams, entity identification and other metadata extraction as well as more advanced methods such as Bert, Word2Vec, GloVe, etc.

Methods were then applied to topics including Attorney Advocacy Networks, Statutory Outputs from Legislatures, various bodies of Regulations, Contracts, Patents, Shell Corporations, Common Law Systems, Legal Scholarship and Legal Rules ∩ Financial Systems.

If you have an eligible paper – it is not too late to submit – papers are due in January. After undergoing the Peer Review process — Look for the Final Papers to be published in Frontiers in Physics in 2021.

Day One of The Physics of Law ONLINE Conference

We are live at the Physics of Law Online Conference!

Over the next two days, we will have 20 Papers Presented from Scholars from Around the World …Click here to access the site so you can Sign Up for Day 2. If you would like to access the full agenda click here.

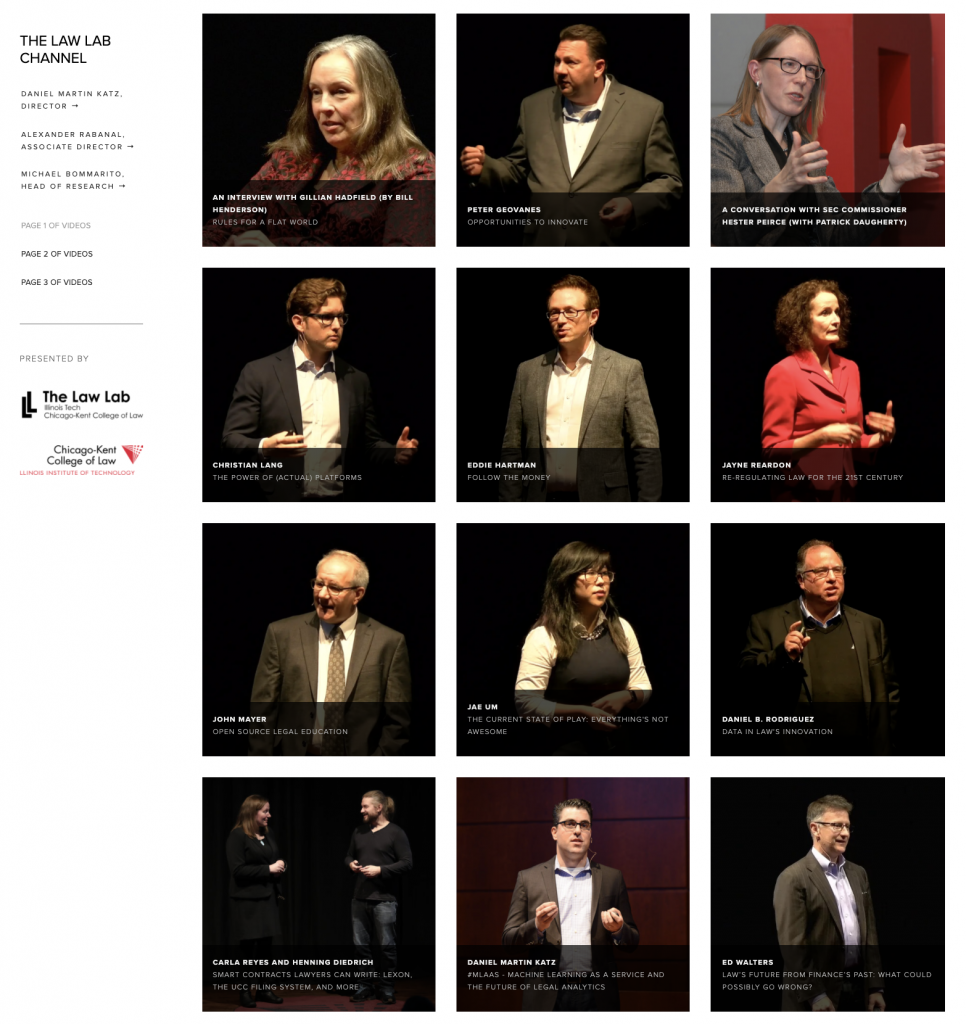

Over 100 Videos on Legal Innovation Now Available at TheLawLabChannel.com

Over the past few years, we have hosted a number of conferences devoted to various sub-topics in legal innovation including The Make Law Better Conference, Fin Legal Tech Conference and the Block Legal Tech Conference. We have aggregated videos from these events on TheLawLabChannel.com for you to enjoy at your convenience.

Fin (Legal) Tech – Bucerius Legal Technology Lecture Series (from 2017)

Lots has happened in the past three years in the Law ∩ Finance space … but here is my 2017 talk that I gave at Bucerius Law School.

Data Science & Machine Learning in Containers (or Ad Hoc vs Enterprise Grade Data Products)

As Mike Bommarito, Eric Detterman and I often discuss – one of the consistent themes in the Legal Tech / Legal Analytics space is the disconnect between what might be called ‘ad hoc’ data science and proper enterprise grade products / approaches (whether B2B or B2C). As part of the organizational maturity process, many organizations who decide that they must ‘get data driven’ start with an ad hoc approach to leveraging doing data science. Over time, it then becomes apparent that a more fundamental and robust undertaking is what is actually needed.

Similar dynamics also exist within the academy as well. Many of the code repos out there would not be considered proper production grade data science pipelines. Among other things, this makes deployment, replication and/or extension quite difficult.

Anyway, this blog post from Neptune.ai outlines just some of these issues.