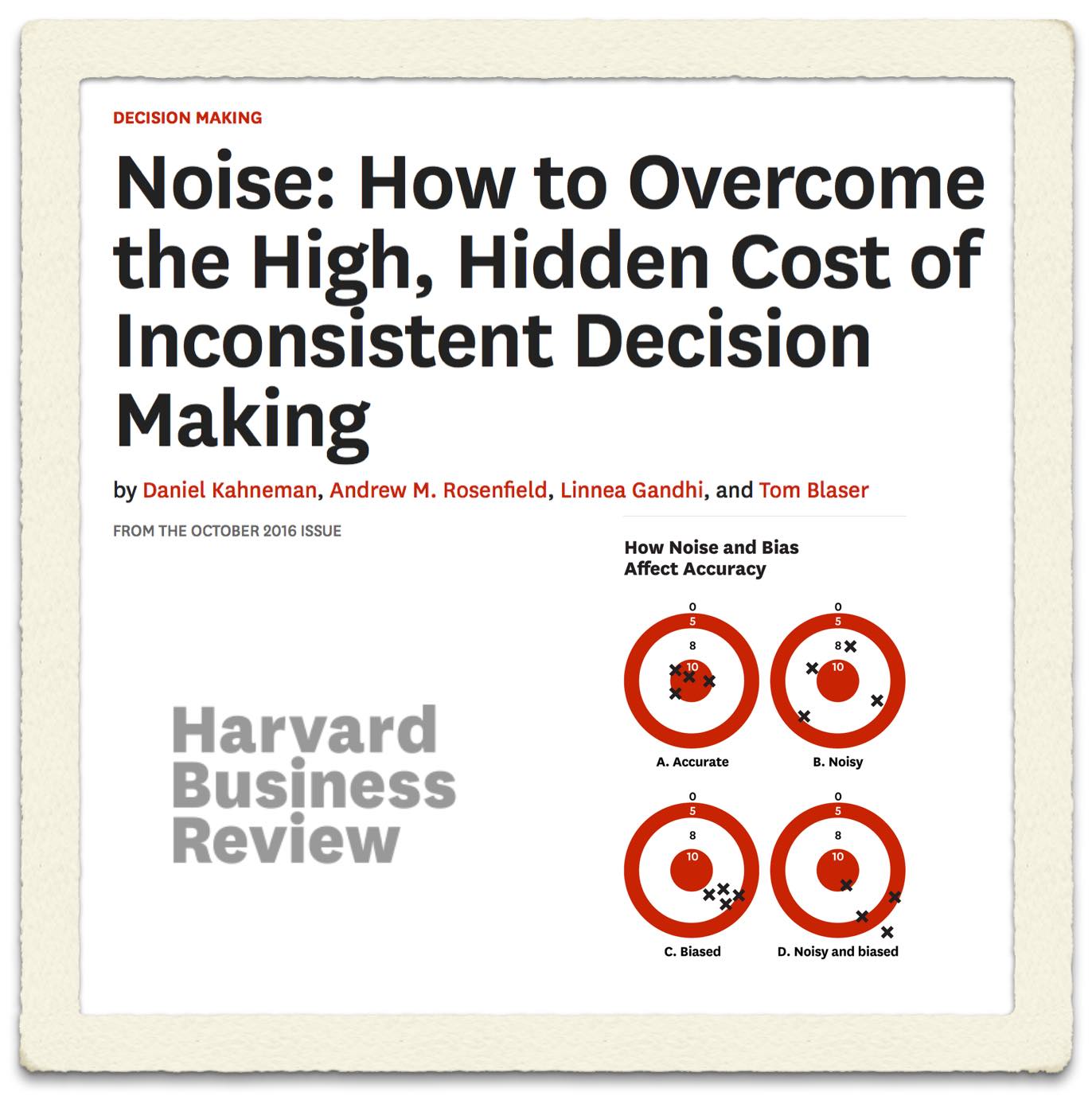

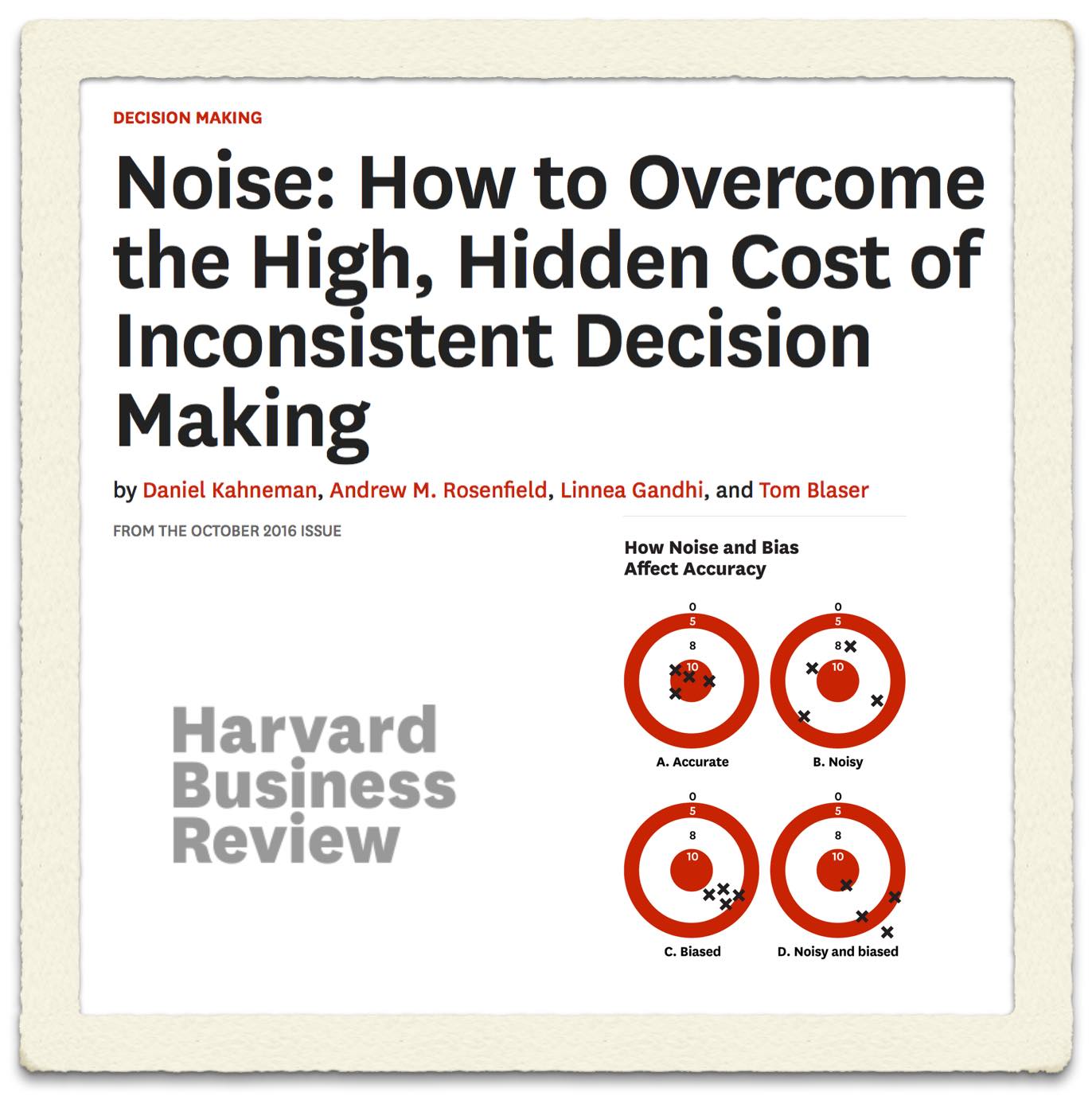

From the article: “The prevalence of noise has been demonstrated in several studies. Academic researchers have repeatedly confirmed that professionals often contradict their own prior judgments when given the same data on different occasions. For instance, when software developers were asked on two separate days to estimate the completion time for a given task, the hours they projected differed by 71%, on average. When pathologists made two assessments of the severity of biopsy results, the correlation between their ratings was only .61 (out of a perfect 1.0), indicating that they made inconsistent diagnoses quite frequently. Judgments made by different people are even more likely to diverge. Research has confirmed that in many tasks, experts’ decisions are highly variable: valuing stocks, appraising real estate, sentencing criminals, evaluating job performance, auditing financial statements, and more. The unavoidable conclusion is that professionals often make decisions that deviate significantly from those of their peers, from their own prior decisions, and from rules that they themselves claim to follow.”

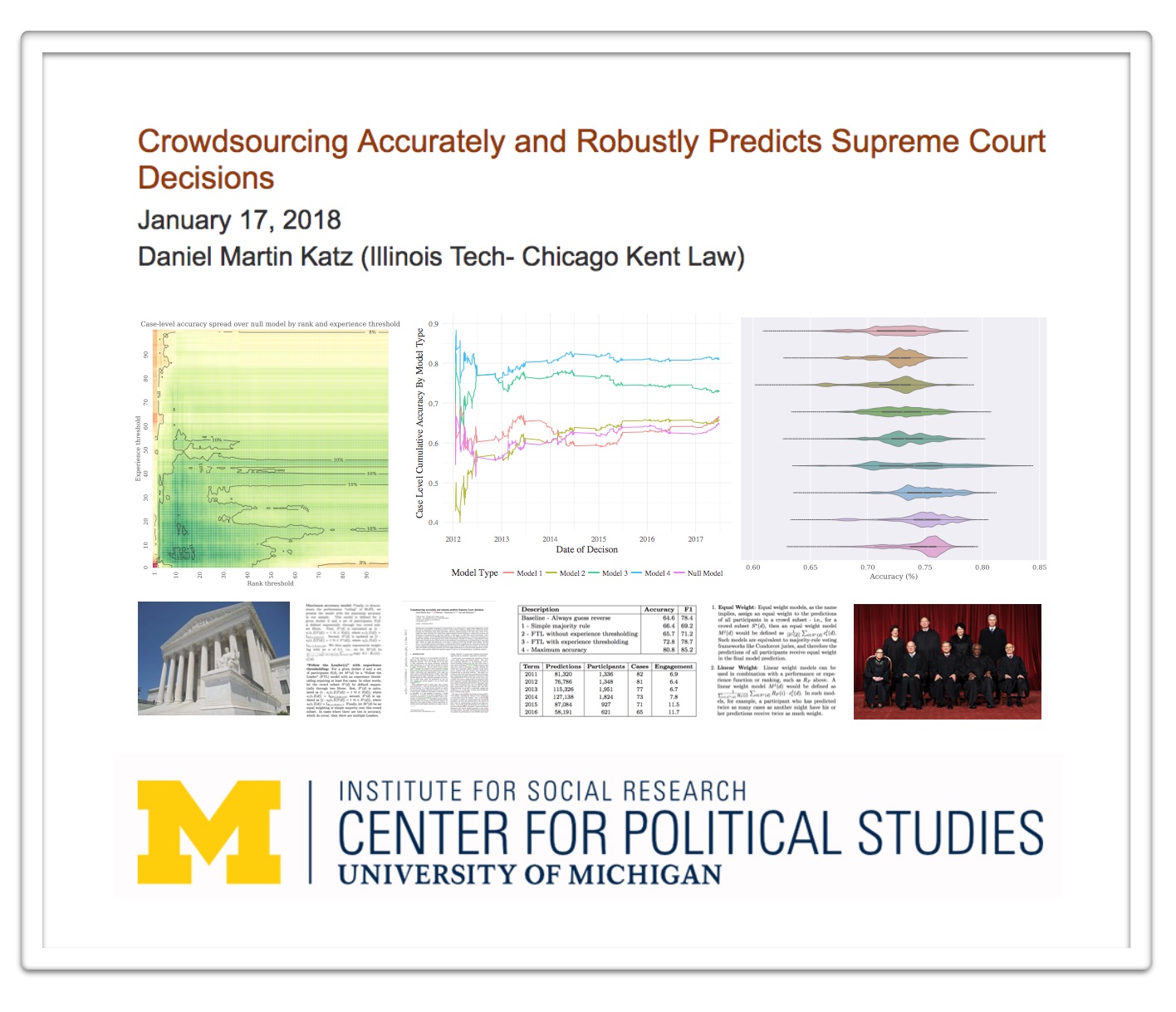

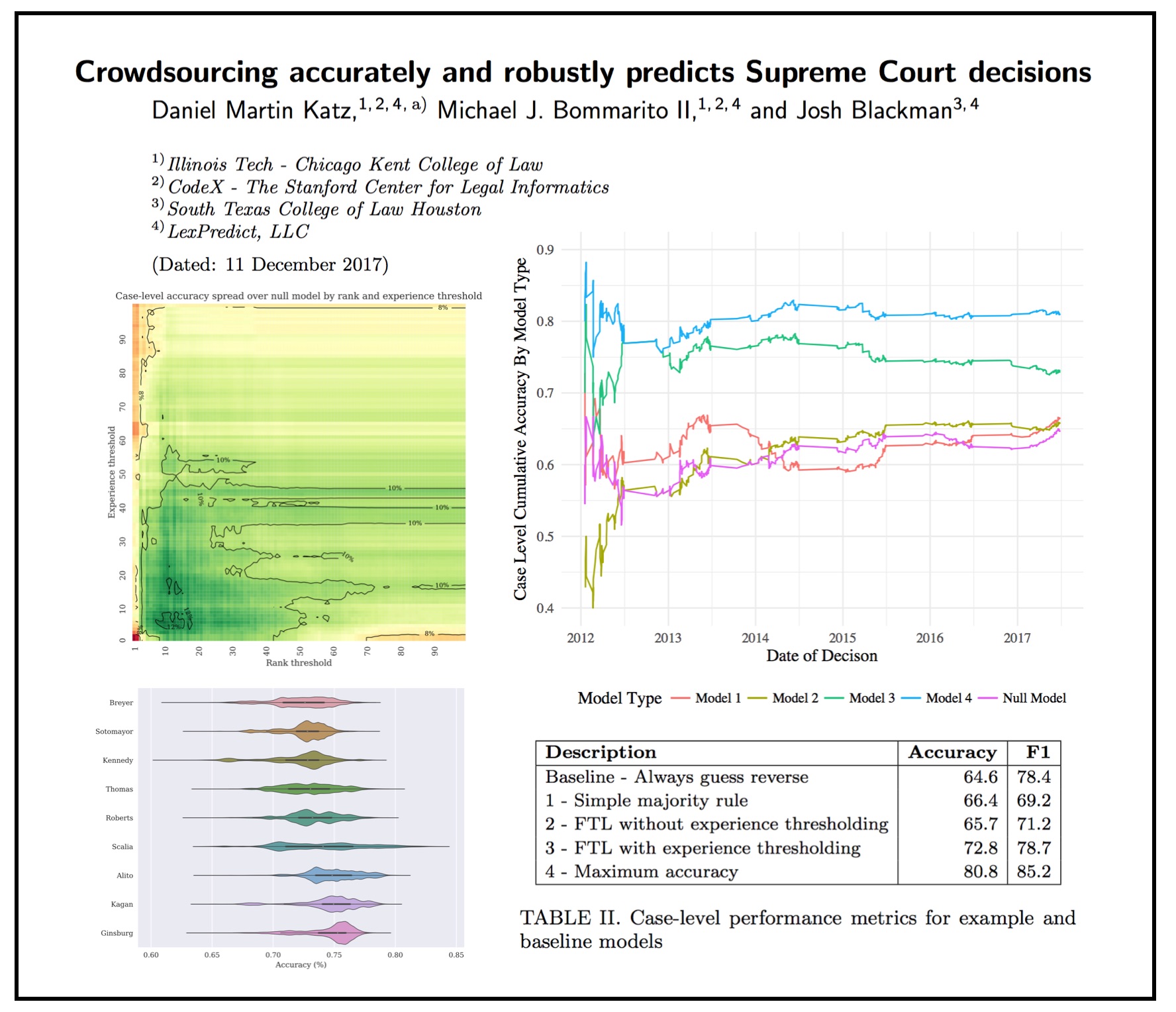

Suffice it to say, we at LexPredict agree. Indeed, building on our work on Fantasy SCOTUS, where our expert crowd outperforms any known single alternative (including the highest-ranked Fantasy SCOTUS player), we have recently launched LexSemble, our configurable crowdsourcing platform, to help legal and other related organizations make better decisions (in transactions, litigation, regulatory matters, etc.).

We are working to pilot with a number of industry partners interested in applying underwriting techniques to more rigorously support their decision making. This is also an example of what we have been calling Fin(Legal)Tech (the financialization of law). If you want to learn more, please sign up for our Fin(Legal)Tech conference on November 4th in Chicago (tickets are free, but space is limited).