Tag: artificial intelligence and law

Legal Futures Conference – London @ The Royal Bank of Scotland – Nov. 19, 2012

On Monday, I will be speaking at the Legal Futures Conference at the Royal Bank of Scotland offices in Central London. The topic is the "Cutting Edge of Law," and all of the respective speakers can lay claim to that title (in one form or another). I am looking forward to an exciting day centered on the future of the legal services industry. Congrats to Neil Rose from The Guardian for organizing such a fantastic lineup!

The Rise of Legal Analytics [via BBJ]

HT: Bill Henderson @ The Legal Whiteboard.

In this vein, watch for my new paper "Quantitative Legal Prediction" (forthcoming on SSRN soon and to be published in the Emory Law Journal in 2013).

Partner Seeking Help On E-Discovery – or – Why it is a Good Idea to Learn Something About E-Discovery Before You Commit Malpractice

This semester here at Michigan State University College of Law, I am team teaching E-Discovery with my colleague Adam Candeub. I enjoyed this video for a number of reasons, chiefly because it highlights the real knowledge gap between the tech-infused lawyer for the 21st century and everyone else. The future belongs to the former, and the time to acquire those skills is now!

Getting Serious About the Future of the Legal Services Industry : Restaurant Chains Have Managed to Combine Quality Control, Cost Control, and Innovation. Can Health Care { or for that matter Legal Services } ?

Just remember that the turmoil in the legal services industry offers both possibility and peril. At our ReInventLaw Lab we are all about the possibility …

One of my last conversations with the late Larry Ribstein was about the very idea in this article, applied not to medicine but to law. What if the sort of process engineering that gave rise to the Cheesecake Factory were brought to bear on the delivery of legal services? The food is very solid (yes, I know it is not five-star dining), but it is affordable for most folks on a Friday night 🙂

Suffice it to say that this (along with legal information engineering) is where a significant share of the growth (and the jobs) in the legal services industry will be located, as we showcased at our recent London event and will do again at our upcoming Dubai and Silicon Valley events.

If you are a law student reading this post, please understand that you can be a leader in this space, as it is still in its infancy. There are lots of law startups working in this and allied domains, but it is highly unlikely that your law school can help you acquire the skills you need to play ball in this arena … If you want to do what is described above, you need a mixture of skills (not just law) …

Here are the four pillars: { law + tech + design + delivery }. That is precisely what we are teaching in the ReInventLaw Lab and in the Michigan State 21st Century Law Practice Summer Program in London. If you want to be part of the action … it is not too late … shoot me an email at daniel.martin.katz@gmail.com and I will tell you how to get serious … because the time for action is now …

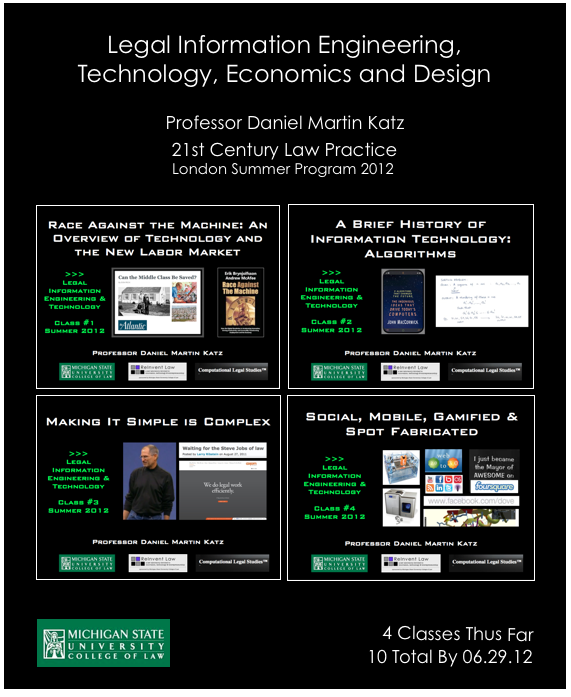

Legal Information Engineering & Technology (with Economics of Tech + Design) – Part of the MSU/Westminster – 21st Century Law Practice Summer Program London 2012

I will be posting materials from my 21st Century Law Practice London Summer Program Course to the course website here (or click on the image above).

This 1-credit course is part of a total of 3 credits in the summer program, which also includes 21st Century Law Practice (taught by Professor Renee Newman Knake @ MSU Law) and The Legal Services Act and UK Deregulation (taught by Professor Lisa Webley @ Westminster Law and Professor John Flood @ Westminster Law).

Students will finish these two weeks of intensive training in law, technology, innovation, and (de)regulation by participating in the public LawTechCamp London 2012, which will take place on June 29, 2012 in Central London. All students will attend this gathering of industry leaders, and several will present their ideas about the future of {law+tech}.