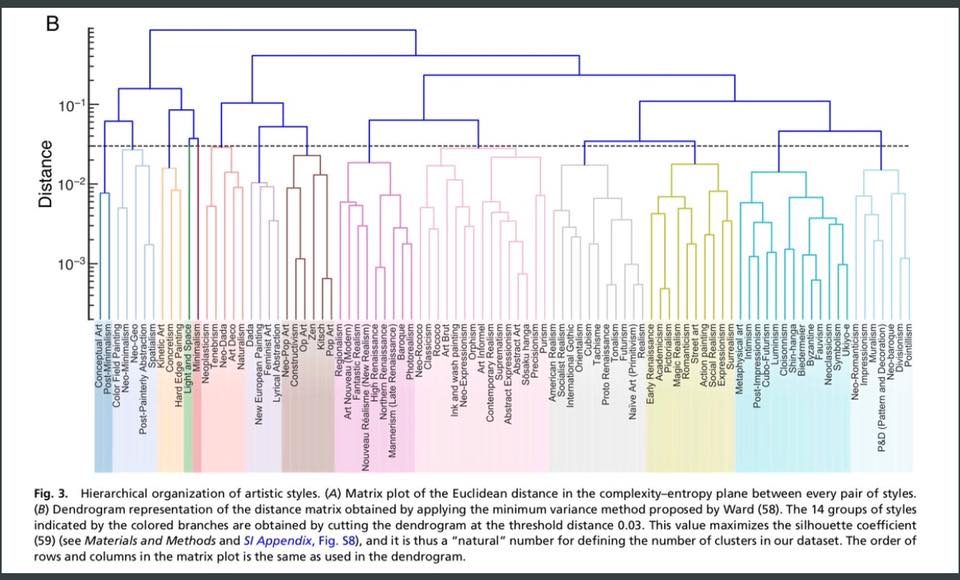

Very interesting paper in PNAS – “History of art paintings through the lens of entropy and complexity – Large-scale quantitative analysis of almost 140,000 paintings (a millennium of art history) estimates the permutation entropy and the statistical complexity of each painting.”
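For readers curious what "permutation entropy" means in practice, here is a minimal sketch. It assumes the standard ordinal-pattern (Bandt-Pompe) definition applied to small sliding patches of a grayscale image. The function name, the 2x2 patch size, and the list-of-lists input are my own assumptions for illustration, not details taken from the paper, and the statistical complexity measure is not covered.

```python
import math
from collections import Counter


def permutation_entropy_2d(image, dx=2, dy=2):
    """Normalized permutation entropy of a 2D grid of numbers (hypothetical helper).

    Slides a dy-by-dx window over the grid, records the ordinal pattern
    (rank order) of the values in each window, and returns the Shannon
    entropy of the pattern frequencies divided by log((dx*dy)!).
    """
    rows, cols = len(image), len(image[0])
    counts = Counter()
    for i in range(rows - dy + 1):
        for j in range(cols - dx + 1):
            patch = [image[i + a][j + b] for a in range(dy) for b in range(dx)]
            # Ordinal pattern: patch positions sorted by value.
            # sorted() is stable, so ties are broken by position.
            pattern = tuple(sorted(range(len(patch)), key=patch.__getitem__))
            counts[pattern] += 1
    total = sum(counts.values())
    entropy = -sum((c / total) * math.log(c / total) for c in counts.values())
    return entropy / math.log(math.factorial(dx * dy))


# A smooth gradient: every patch has the same ordering, so entropy is zero.
gradient = [[10 * r + c for c in range(6)] for r in range(6)]
h_gradient = permutation_entropy_2d(gradient)
```

Values near 0 indicate highly regular pixel orderings, while values near 1 indicate that all orderings occur about equally often, as in noise.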

Tag: complex systems

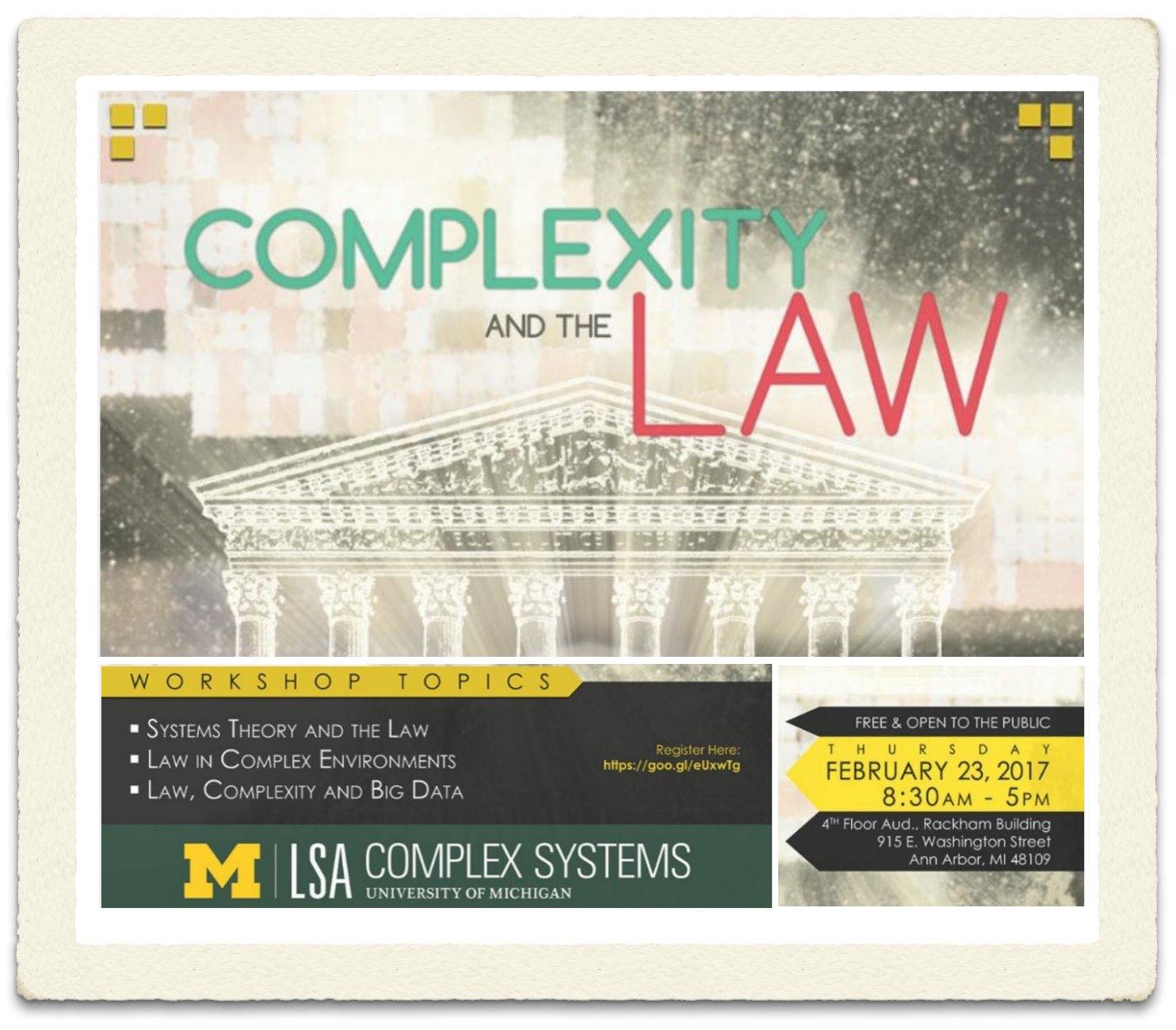

Workshop on Complexity and the Law (University of Michigan Center for the Study of Complex Systems)

I am very excited to be heading back to UM – Ann Arbor to speak at the Workshop on Law + Complex Systems. I am particularly interested given that my PhD thesis is called “Modeling Law as a Complex Adaptive System.”


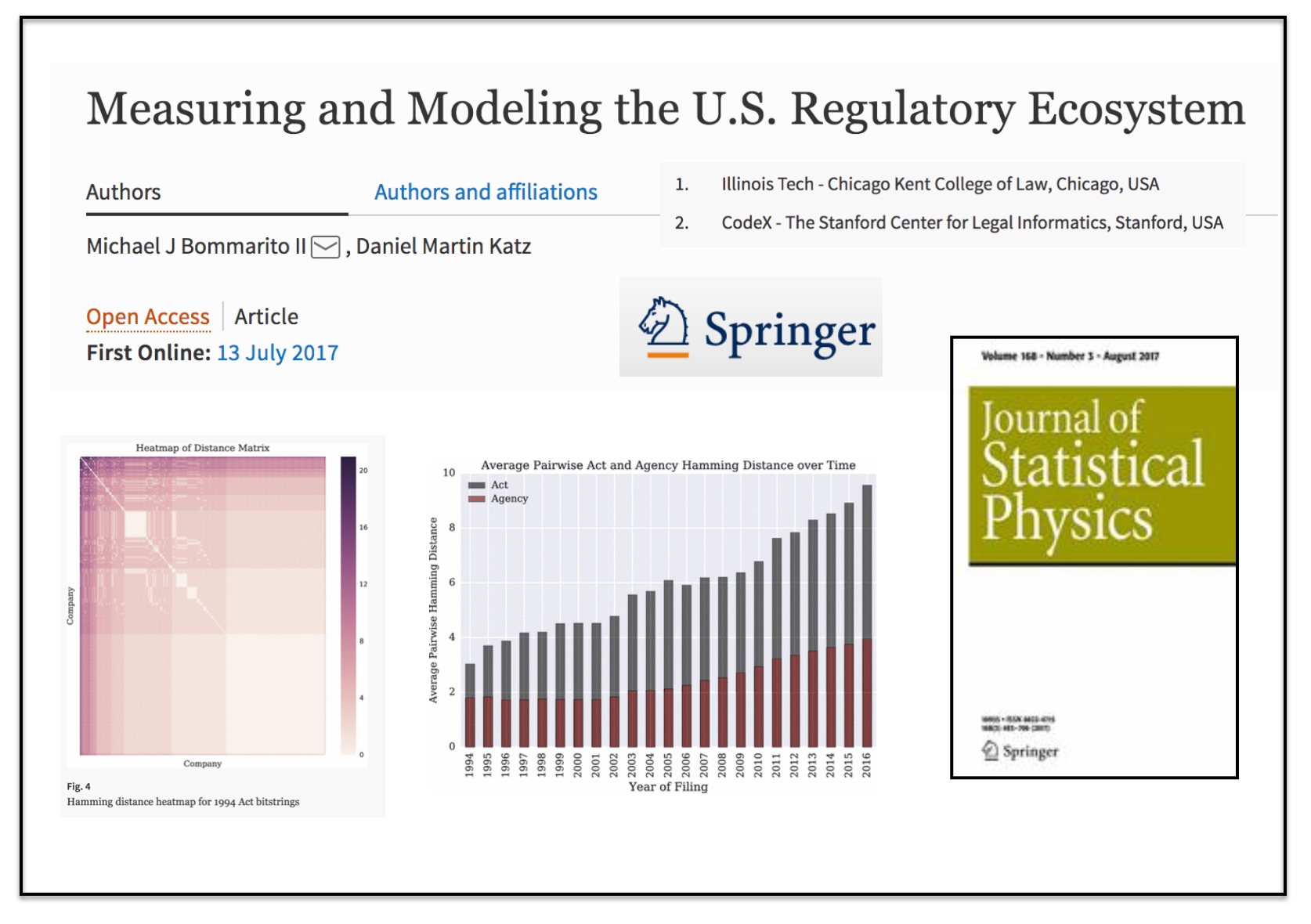

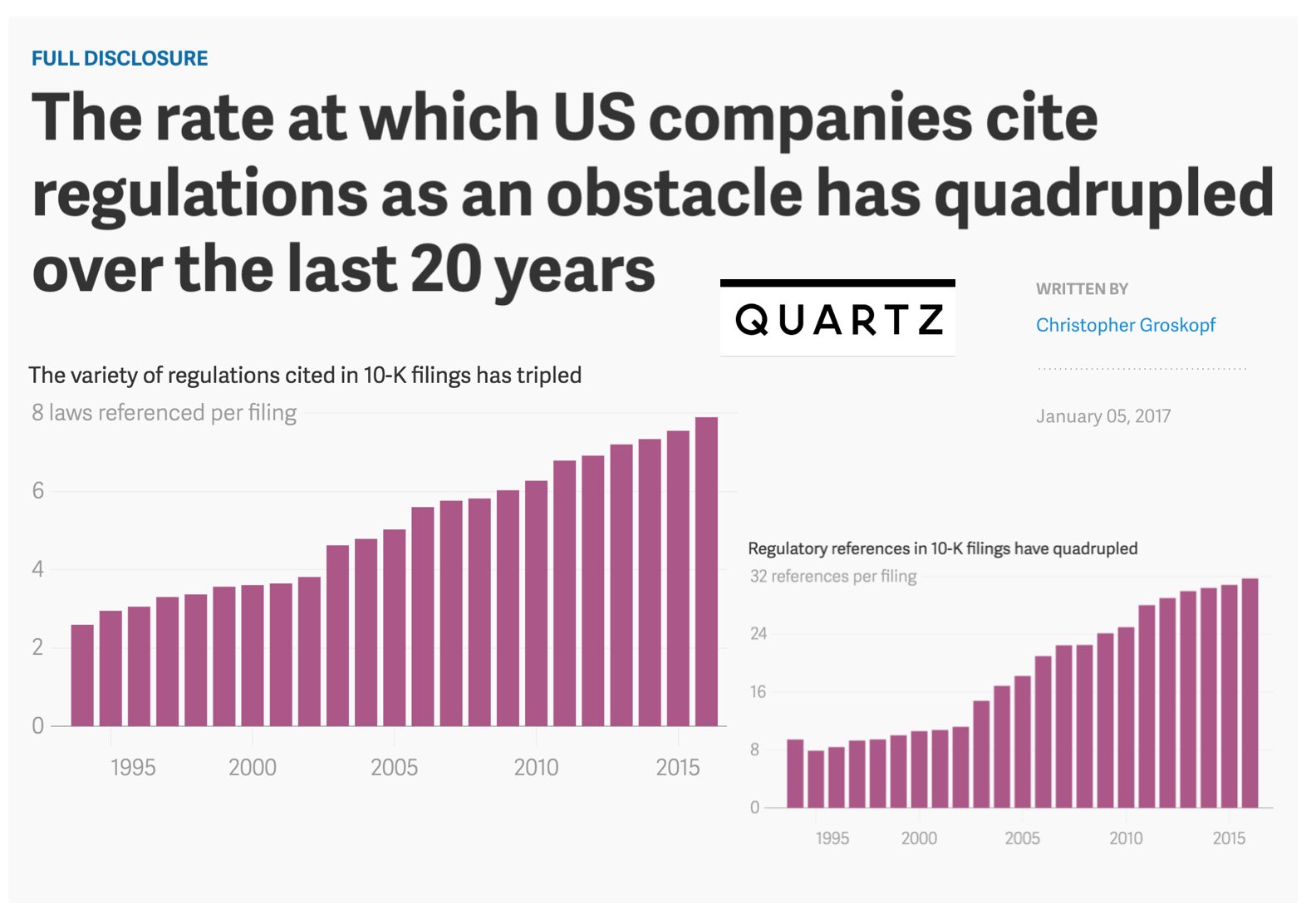

The rate at which US Companies cite regulations as an obstacle has quadrupled over the last 20 years (via Quartz)

“Michael Bommarito II and Daniel Martin Katz, legal scholars at the Illinois Institute of Technology, have tried to measure the growth of regulation by analyzing more than 160,000 corporate annual reports, or 10-K filings, at the US Securities and Exchange Commission. In a pre-print paper released Dec. 29, the authors find that the average number of regulatory references in any one filing increased from fewer than eight in 1995 to almost 32 in 2016. The average number of different laws cited in each filing more than doubled over the same period.”


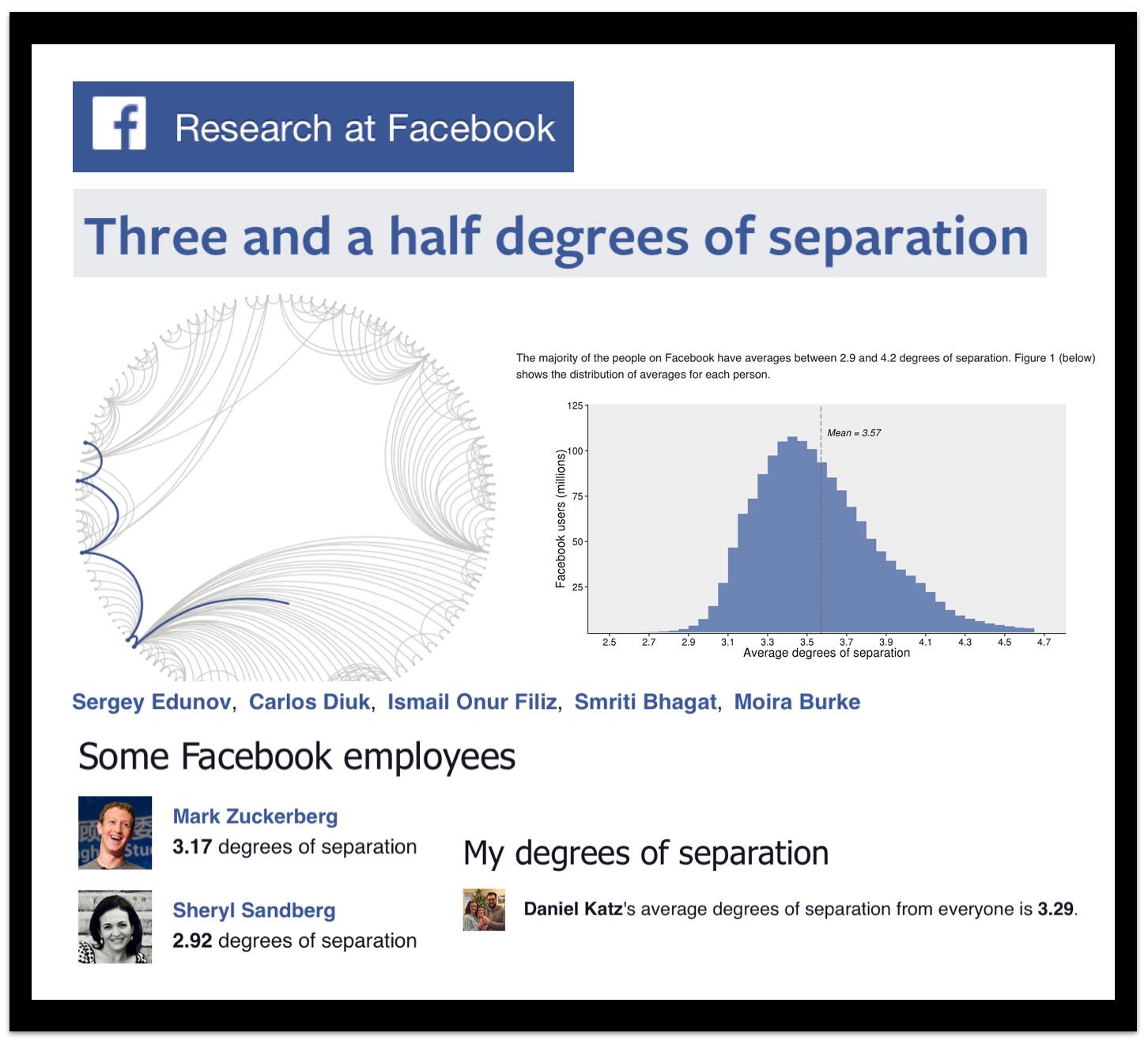

Three and a Half Degrees of Separation (via Facebook Research)

From the original Milgram research in the 1960s to the Dodds, Muhamad & Watts (2003) paper in Science, the social distance between individuals in society has been a topic of interest to many scientists. This new release from researchers at Facebook highlights that social distance is indeed declining. For those who might be interested, I detail the history of the small world research in these slides and these slides.
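To make "degrees of separation" concrete, here is a toy sketch that computes the average shortest-path length between all connected pairs in a small friendship graph using breadth-first search. The function name and the example graph are hypothetical, and this exact computation is only an illustration of the quantity being measured; it is not the method the Facebook researchers used, and it would not scale to a graph of that size.

```python
from collections import deque


def average_separation(graph):
    """Average shortest-path length over all connected ordered pairs.

    `graph` maps each node to an iterable of its neighbors (undirected
    graphs should list each edge in both directions).
    """
    total, pairs = 0, 0
    for source in graph:
        # Breadth-first search gives hop counts from `source`.
        dist = {source: 0}
        queue = deque([source])
        while queue:
            node = queue.popleft()
            for neighbor in graph[node]:
                if neighbor not in dist:
                    dist[neighbor] = dist[node] + 1
                    queue.append(neighbor)
        total += sum(dist.values())
        pairs += len(dist) - 1  # exclude the source itself
    return total / pairs if pairs else 0.0


# Hypothetical four-person chain: a - b - c - d
chain = {"a": ["b"], "b": ["a", "c"], "c": ["b", "d"], "d": ["c"]}
avg_hops = average_separation(chain)
```

In this chain the two people at the ends are three hops apart, and adding a single shortcut edge between them would pull the average down, which is the basic small-world intuition.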

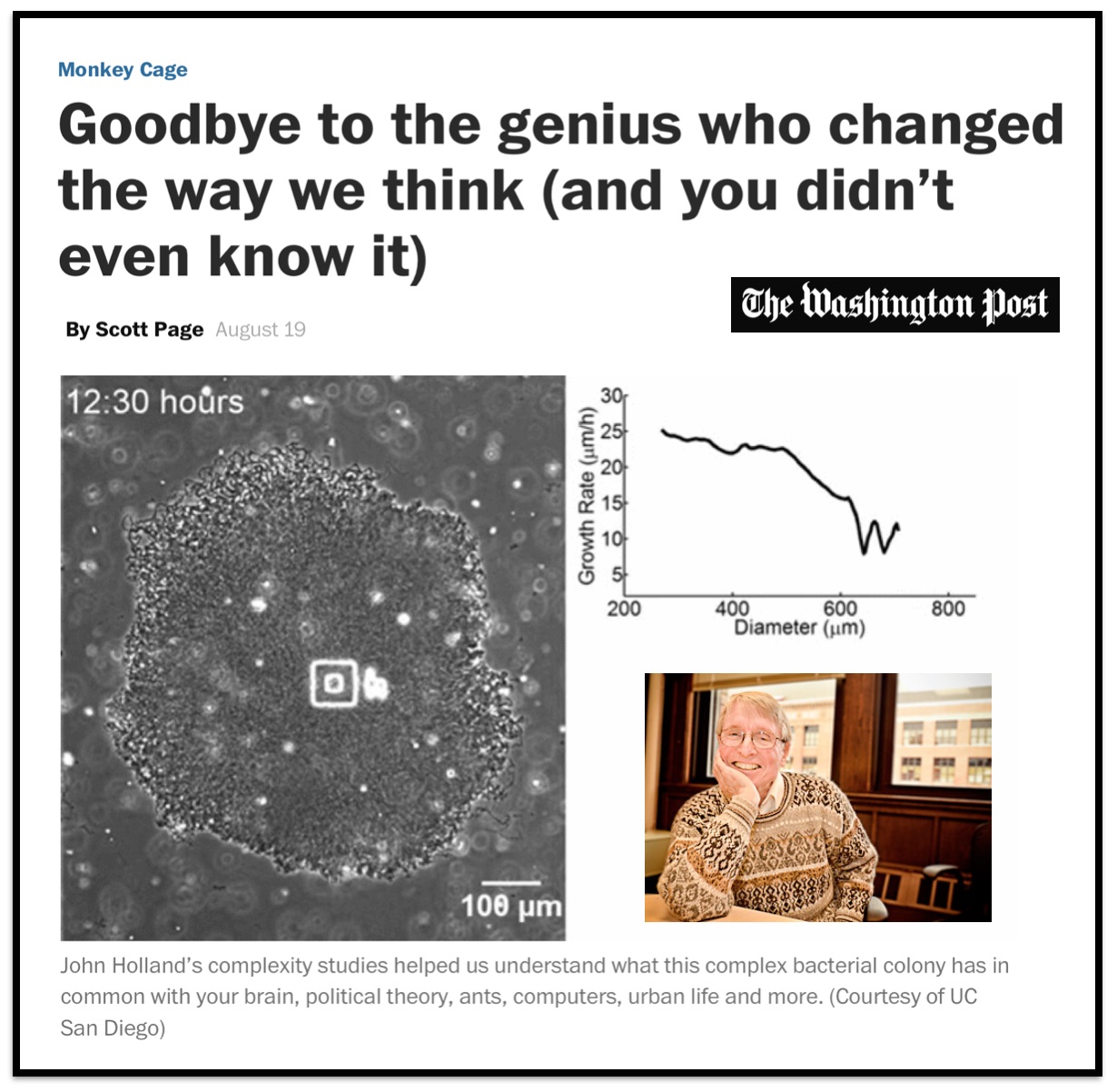

John Holland, Computer Scientist, Psychologist, and Complexity Scientist (1929-2015)

Mike and I had the great pleasure of spending several years at the University of Michigan Center for the Study of Complex Systems where John Holland spent a fair amount of his time. He was a very giving person and of course – a true genius! Rest in peace.


Teaching the Complex Systems Course @ University of Michigan ICPSR Summer Program in Quantitative Methods

This upcoming week and next week I have the pleasure of teaching “Complex Systems Models in the Social Sciences” here at the University of Michigan ICPSR Summer Program in Quantitative Methods. The field of complex systems is very diverse, and it is difficult to do complete justice to the range of scholarship conducted under this umbrella in a short survey course. However, we strive to cover the canonical topics such as computational game theory and computational modeling, network science, natural language processing, randomness vs. determinism, diffusion, cascades, emergence, empirical approaches to studying complexity (including measurement), social epidemiology, non-linear dynamics, etc. Click here or on the image above to access my course materials!
